PIE prioritisation method

Behind its flimsy facade, this three-letter approach to prioritising a backlog of A/B tests hides an elegant and effective framework for communication, collaboration and consensus-building.

PIE: Deceptively simple

If you’re running more than one or two A/B tests a month, you need a prioritisation method. If you’re working with a team that’s bigger than just you, you need a prioritisation method.

Actually, even if it’s just you, you need a prioritisation method.

Why you need a prioritisation method

Formal prioritisation helps you avoid wasting time and energy on low-impact, high-effort tests.

It makes sure that you focus on where you can get the best results for the least effort.

The process of prioritisation with a group has the added benefit of building buy-in and investment in the direction of your testing program.

How PIE works

First proposed by Chris Goward at Wider Funnel back in 2011, PIE has since become the de facto standard for A/B test prioritisation.

Optimizely's Program Management tool uses it natively, as does VWO's equivalent, I understand.

PIE scoring works like this:

You assign each test candidate a score out of ten according to the following criteria:

- Potential - How much improvement can be made?

- Importance - How valuable is the traffic to the pages?

- Ease - How complicated will the test be to implement?

After you’ve scored each criterion, you take the average of the three scores.

The number that’s left over?

That’s your PIE score.

Is it scientific? It is not.

Is it useful? It certainly is.
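To make the arithmetic concrete, here's a minimal sketch of the scoring described above. The `Candidate` class, the test names and the scores are all made up for illustration; the only thing taken from PIE itself is the three criteria and the average.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    """A hypothetical test candidate, each criterion scored out of ten."""
    name: str
    potential: int   # How much improvement can be made?
    importance: int  # How valuable is the traffic to the pages?
    ease: int        # How complicated will the test be to implement?

    def pie_score(self) -> float:
        # The PIE score is simply the average of the three criteria.
        return round((self.potential + self.importance + self.ease) / 3, 1)


# Illustrative backlog; the scores are invented guesstimates.
backlog = [
    Candidate("Footer link colour", potential=2, importance=3, ease=10),
    Candidate("Checkout redesign", potential=9, importance=8, ease=2),
    Candidate("Homepage hero copy", potential=6, importance=9, ease=8),
]

# Highest PIE score first.
ranked = sorted(backlog, key=lambda c: c.pie_score(), reverse=True)

for candidate in ranked:
    print(f"{candidate.pie_score():>4}  {candidate.name}")
```

With these invented numbers, the hero copy test (7.7) comes out ahead of the checkout redesign (6.3), because the redesign's low Ease score drags its average down. Whether that ordering is actually right is exactly the kind of thing the scores are there to prompt a conversation about.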

Why PIE sucks

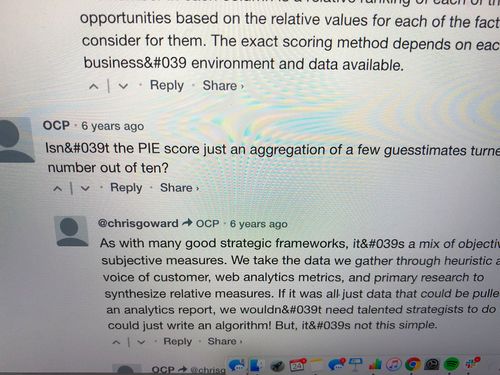

If you scroll down to the comments section at the bottom of the blog post which introduced PIE to the world, you’ll see a comment from one “OCP” (that’s me, Oliver Charles Palmer in anonymous troll mode) basically calling bullshit on the whole endeavour.

Isn’t the PIE score just an aggregation of a few guesstimates turned into a number out of ten?

Me anonymously trolling on the original PIE score blog post on the Wider Funnel blog

To which Chris thoughtfully responds:

As with many good strategic frameworks, it’s a mix of objective and subjective measures ... If it was all just data that could be pulled from an analytics report, we wouldn’t need talented strategists to do it. We could just write an algorithm! But, it’s not this simple.

He is right.

Anonymous troll me is also right, incidentally, but more on that in a moment.

Surely there's something better?

Now and then, new prioritisation methods crop up.

Here's a handful of the more widely adopted ones:

ICE

Developed by the team at Growth Hackers, ICE is a fairly popular PIE alternative:

- Impact - What will the impact be if this works?

- Confidence - How confident am I that this will work?

- Ease - What is the ease of implementation?

To my mind, ICE is a slightly improved take on PIE.

I've singled out PIE in this post because it is the method that I use (and the first that I came across, for that matter).

That being said, everything here could equally be applied to ICE or probably any other future 3-letter permutations and variations (including my own take on PIE, below).

Modified PIE

In my own work, I actually use a slightly modified version of PIE.

- Priority - How much does this align with our business focus?

- Impact - What will the impact be if this works?

- Ease - How complicated will the test be to implement and analyse?

I wasn't aware of ICE when I made these tweaks, but it makes the same move of swapping 'Importance' for 'Impact'.

I began using this version of PIE with my clients because I found myself regularly struggling to explain the difference between 'Potential' and 'Importance'.

Without thinking about it too much, this version emerged.

I feel like it offers a subtle improvement over the original, even if it uses the same three letters! :-)

PXL

CXL have developed PXL (introduced in a blog post which cites my comment above, no less) which adds umpteen different data points (“Above the fold?” and “Noticeable within 5 seconds?”, etc.) in a spreadsheet and then applies a score to your inputs.

I have never investigated PXL in detail, but my feeling is that this is a vain attempt at making prioritisation ‘more scientific’ and giving the result some sort of objective legitimacy.

I think this is utterly too complicated and maddening and silly and I feel sorry for anyone who's tried to do this on a large backlog in a group environment.

Why PIE rules

Simplicity as a feature

PIE (like ICE) offers three simple data points.

It's not a framework for objective prioritisation.

PIE doesn't bestow any quasi-scientific legitimacy on the prioritisation choices that we make. Instead, in its beautiful simplicity, PIE puts the onus on us to ask the right questions and use the answers to make sensible, informed decisions.

Provides a framework for debate

PIE provides a simple and accessible framework for discussion and debate.

This method comes into its own where you’re running an optimisation program with a range of stakeholders with competing interests.

If you get everyone involved in the program into a room once a month and sit down and debate the PIE scores for each test, all parties will typically, in my experience, leave comfortable that you've decided on the right tests, for the right reasons.

Zero embedded values

As with others like it, the PXL method is riddled with value judgements.

For example, some of the inputs required to score each test are:

- Is it above the fold?

- Adding or removing content?

- Noticeable within five seconds?

There's a contextual value judgement in each of these which I think needs to be unravelled in each case.

Also: are we still talking about the fold?

It's fast

Because you're entering just three scores per test, prioritisation with PIE doesn't have to be a long and complicated process.

If you ever finish PXLing your backlog, give me a shout and we'll compare notes.

PIE is as good as it gets

As Chris indicates in his comment all those years ago, he was aware that creating a scientific arbiter of impact and effort would be a quixotic struggle.

Instead, PIE gives you a way to focus your intellect and reasoning and facilitate and guide discussion with your stakeholders sensibly and productively.

So yeah, if you’re looking for a magic number-crunching test prioritisation machine, PIE sucks.

For the rest of us, however, it’s an invaluable tool.

Thanks for sharing it, Chris, and sorry for trolling you 😉